This article examines how container-to-container communication is implemented in Kubernetes networking, verifying each step with command output from a running cluster.

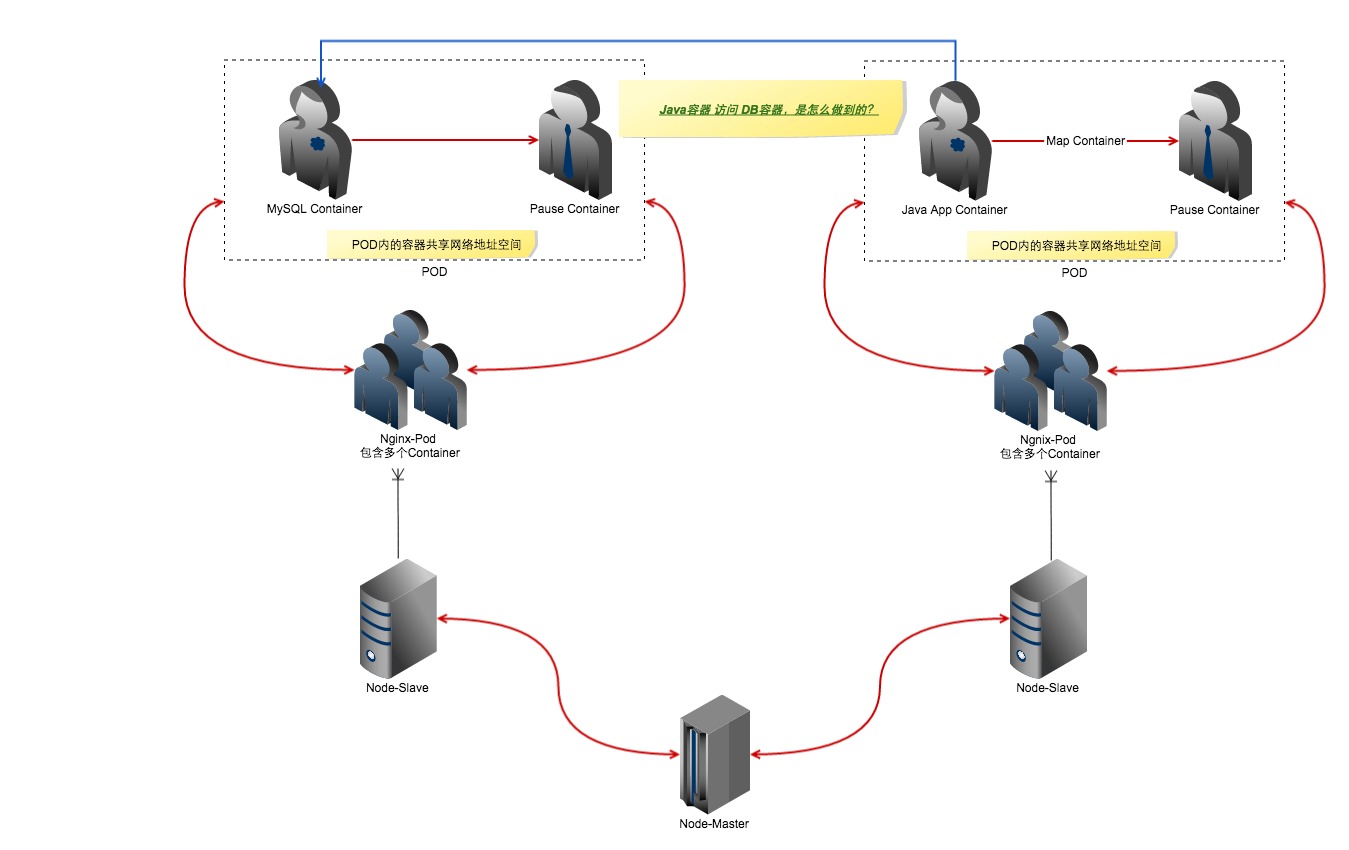

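The figure above shows the basic architecture of Kubernetes.

The Master manages multiple worker nodes (Nodes).

Each Node can run multiple Pods.

A Pod can be deployed as multiple replicas, and the replicas can run on different Nodes.

A Pod can contain multiple containers, and the containers in a Pod share the same network namespace.

The last point is the most important one: the containers in a Pod share the same network namespace. This is done with Docker's mapped container network mode.

In outline:

Container A uses Docker's normal network mode.

Container B uses a network mode that references container A, so it joins A's network stack.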
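As a rough illustration, Docker exposes this mapped container mode through the `--net=container:<name|id>` option of `docker run` (spelled `--network` in newer Docker releases). The sketch below only builds the two command lines and does not start anything. The container names `a` and `b`, the `busybox` image, and the helper name `mapped_container_commands` are assumptions made for this example.

```python
def mapped_container_commands(owner="a", joiner="b", image="busybox"):
    """Build docker CLI argument lists for two containers sharing one network stack.

    `owner` gets its own network stack (Docker's default bridge mode).
    `joiner` reuses that stack through --net=container:<owner>.
    All names are hypothetical and exist only for this sketch.
    """
    run_owner = ["docker", "run", "-d", "--name", owner, image, "sleep", "3600"]
    run_joiner = [
        "docker", "run", "-d", "--name", joiner,
        "--net=container:" + owner,  # join the owner's network namespace
        image, "sleep", "3600",
    ]
    return run_owner, run_joiner


if __name__ == "__main__":
    for argv in mapped_container_commands():
        print(" ".join(argv))
```

Running both commands on a Docker host should leave the two containers with the same interfaces and IP address, which is what the pause container provides for every Pod.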

### 2.1 Shared network mode ###

The following example verifies this. I created a busybox Pod.

    [root@centos7-node-221 ~]$ kubectl get po
    NAME      READY     STATUS    RESTARTS   AGE
    busybox   1/1       Running   224        9d
    [root@centos7-node-221 ~]$ kubectl describe po busybox
    Name:                       busybox
    Namespace:                  default
    Image(s):                   busybox
    Node:                       centos7-node-226/192.168.1.226
    Labels:                     <none>
    Status:                     Running
    Reason:
    Message:
    IP:                         172.16.58.6
    Replication Controllers:    <none>
    Containers:
      busybox:
        Image:                  busybox
        State:                  Running
          Started:              Thu, 08 Oct 2015 08:20:30 -0400
        Last Termination State: Terminated
          Exit Code:            0
          Started:              Thu, 08 Oct 2015 07:20:26 -0400
          Finished:             Thu, 08 Oct 2015 08:20:26 -0400
        Ready:                  True
        Restart Count:          224
        Variables:
    Conditions:
      Type      Status
      Ready     True
    Volumes:
      default-token-lv94w:
        Type:       Secret (a secret that should populate this volume)
        SecretName: default-token-lv94w
    Events:
      FirstSeen  LastSeen  Count  From                        SubobjectPath             Reason   Message
      9d         37m       225    {kubelet centos7-node-226}  spec.containers{busybox}  pulled   Container image "busybox" already present on machine
      37m        37m       1      {kubelet centos7-node-226}  spec.containers{busybox}  Created  Created with docker id fc8580292210
      37m        37m       1      {kubelet centos7-node-226}  spec.containers{busybox}  Started  Started with docker id fc8580292210

Let's go to 192.168.1.226 and look at this Pod and its containers.

    [root@centos7-node-226 ~]$ docker ps | grep busybox
    fc8580292210  busybox                               "sleep 3600"  37 minutes ago  Up 37 minutes  k8s_busybox.62fa0587_busybox_default_86e98e8c-665f-11e5-af98-525400d7abb6_7f734c4d
    02d259dc8ab5  gcr.io/google_containers/pause:0.8.0  "/pause"      9 days ago      Up 9 days      k8s_POD.7be6d81d_busybox_default_86e98e8c-665f-11e5-af98-525400d7abb6_ff9224f5

There are two containers: a pause container and the busybox container. The pause container is the Pod's primary network container, and every other container in the Pod shares its network stack. Here are the network modes of the two containers.

    [root@centos7-node-226 ~]$ docker inspect 02d259dc8ab5 | grep NetworkMode
            "NetworkMode": "bridge",
    [root@centos7-node-226 ~]$ docker inspect fc8580292210 | grep NetworkMode
            "NetworkMode": "container:02d259dc8ab59c1746d54d2df24d8733b2b9379a9fdfbfdc2066429b4a934a04",  # this id is the long form of the previous container's id

So fc8580292210 (busybox) uses the network namespace of the pause container.
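To make the relationship explicit, the sketch below follows `container:<id>` references in `NetworkMode` values until it reaches a container that owns its network stack. The two `NetworkMode` values are copied from the `docker inspect` output in this section. The function name `netns_owner` and the dictionary layout are assumptions for illustration, not part of Docker's API.

```python
def netns_owner(container_id, network_modes):
    """Follow 'container:<id>' references until a container with its own network stack is found."""
    seen = set()
    current = container_id
    while True:
        if current in seen:
            raise ValueError("cyclic NetworkMode reference at " + current)
        seen.add(current)
        mode = network_modes[current]
        if not mode.startswith("container:"):
            return current  # e.g. "bridge": this container owns the namespace
        current = mode[len("container:"):]


# Long id of the pause container, as printed by docker inspect.
PAUSE_ID = "02d259dc8ab59c1746d54d2df24d8733b2b9379a9fdfbfdc2066429b4a934a04"

# The busybox container is keyed by its short id because the article never shows its long id.
MODES = {
    PAUSE_ID: "bridge",
    "fc8580292210": "container:" + PAUSE_ID,
}

if __name__ == "__main__":
    print(netns_owner("fc8580292210", MODES)[:12])
```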

Let's verify this further.

On 192.168.1.224 I deployed a DNS Pod that has four application containers sharing one network namespace. We check this by comparing their IP addresses, hostnames, and network connections. The container ids are listed below.

    [root@centos7-node-224 ~]$ docker ps | grep dns
    b00a08d078d6  dockerimages.yinnut.com:15043/skydns:2015-03-11-001  "/skydns -machines=h  8 hours ago  Up 8 hours  k8s_skydns.c878079e_kube-dns-v9-y05vd_kube-system_12725077-64c0-11e5-9309-525400d7abb6_46f95e60
    4e843585b938  dockerimages.yinnut.com:15043/exechealthz:1.0        "/exechealthz '-cmd=  11 days ago  Up 11 days  k8s_healthz.8ab20f84_kube-dns-v9-y05vd_kube-system_12725077-64c0-11e5-9309-525400d7abb6_f7c469e5
    296ff779abb2  dockerimages.yinnut.com:15043/kube2sky:1.11          "/kube2sky -domain=c  11 days ago  Up 11 days  k8s_kube2sky.2a46d768_kube-dns-v9-y05vd_kube-system_12725077-64c0-11e5-9309-525400d7abb6_349c7246
    f0118fac6952  dockerimages.yinnut.com:15043/etcd:2.0.9             "/usr/local/bin/etcd  11 days ago  Up 11 days  k8s_etcd.64e02c2f_kube-dns-v9-y05vd_kube-system_12725077-64c0-11e5-9309-525400d7abb6_9235054b
    f281dbf1ec41  gcr.io/google_containers/pause:0.8.0                 "/pause"              11 days ago  Up 11 days  k8s_POD.6e934112_kube-dns-v9-y05vd_kube-system_12725077-64c0-11e5-9309-525400d7abb6_a8ea96d0

We look at these properties for the first three containers: b00a08d078d6, 4e843585b938, and 296ff779abb2.

    [root@centos7-node-224 ~]$ for id in b00a08d078d6 4e843585b938 296ff779abb2 ; do echo $id; docker exec $id cat /etc/hosts ; docker exec $id cat /etc/resolv.conf ; echo "" ; done
    b00a08d078d6
    172.16.60.4 kube-dns-v9-y05vd
    127.0.0.1 localhost
    ::1 localhost ip6-localhost ip6-loopback
    fe00::0 ip6-localnet
    ff00::0 ip6-mcastprefix
    ff02::1 ip6-allnodes
    ff02::2 ip6-allrouters
    nameserver 192.168.1.208
    search 8.8.8.8
    options ndots:5
    4e843585b938
    172.16.60.4 kube-dns-v9-y05vd
    127.0.0.1 localhost
    ::1 localhost ip6-localhost ip6-loopback
    fe00::0 ip6-localnet
    ff00::0 ip6-mcastprefix
    ff02::1 ip6-allnodes
    ff02::2 ip6-allrouters
    nameserver 192.168.1.208
    search 8.8.8.8
    options ndots:5
    296ff779abb2
    172.16.60.4 kube-dns-v9-y05vd
    127.0.0.1 localhost
    ::1 localhost ip6-localhost ip6-loopback
    fe00::0 ip6-localnet
    ff00::0 ip6-mcastprefix
    ff02::1 ip6-allnodes
    ff02::2 ip6-allrouters
    nameserver 192.168.1.208
    search 8.8.8.8
    options ndots:5

    [root@centos7-node-224 ~]$ for id in b00a08d078d6 4e843585b938 296ff779abb2 ; do echo $id; docker exec $id ip a ; echo "" ; done
    b00a08d078d6
    1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default
        link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
        inet 127.0.0.1/8 scope host lo
           valid_lft forever preferred_lft forever
    4e843585b938
    1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default
        link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
        inet 127.0.0.1/8 scope host lo
           valid_lft forever preferred_lft forever
    296ff779abb2
    1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default
        link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
        inet 127.0.0.1/8 scope host lo
           valid_lft forever preferred_lft forever

    [root@centos7-node-224 ~]$ for id in b00a08d078d6 4e843585b938 296ff779abb2 ; do echo $id; docker exec $id netstat -lan ; echo "" ; done
    b00a08d078d6
    Active Internet connections (servers and established)
    Proto Recv-Q Send-Q Local Address        Foreign Address      State
    tcp        0      0 127.0.0.1:4001       0.0.0.0:*            LISTEN
    tcp        0      0 127.0.0.1:2379       0.0.0.0:*            LISTEN
    tcp        0      0 127.0.0.1:2380       0.0.0.0:*            LISTEN
    tcp        0      0 127.0.0.1:7001       0.0.0.0:*            LISTEN
    tcp        0      0 172.16.60.4:48582    10.254.0.1:443       ESTABLISHED
    tcp        0      0 127.0.0.1:4001       127.0.0.1:35394      ESTABLISHED
    tcp        0      0 172.16.60.4:48584    10.254.0.1:443       ESTABLISHED
    tcp        0      0 127.0.0.1:60161      127.0.0.1:2379       ESTABLISHED
    tcp        0      0 127.0.0.1:51445      127.0.0.1:4001       ESTABLISHED
    tcp        0      0 127.0.0.1:4001       127.0.0.1:51445      ESTABLISHED
    tcp        0      0 127.0.0.1:35550      127.0.0.1:4001       ESTABLISHED
    tcp        0      0 127.0.0.1:35394      127.0.0.1:4001       ESTABLISHED
    tcp        0      0 127.0.0.1:2379       127.0.0.1:60161      ESTABLISHED
    tcp        0      0 127.0.0.1:4001       127.0.0.1:51433      ESTABLISHED
    tcp        0      0 127.0.0.1:51433      127.0.0.1:4001       ESTABLISHED
    tcp        0      0 127.0.0.1:4001       127.0.0.1:35550      ESTABLISHED
    Active UNIX domain sockets (servers and established)
    Proto RefCnt Flags       Type       State         I-Node Path
    4e843585b938
    Active Internet connections (servers and established)
    Proto Recv-Q Send-Q Local Address        Foreign Address      State
    tcp        0      0 127.0.0.1:4001       0.0.0.0:*            LISTEN
    tcp        0      0 127.0.0.1:2379       0.0.0.0:*            LISTEN
    tcp        0      0 127.0.0.1:2380       0.0.0.0:*            LISTEN
    tcp        0      0 127.0.0.1:7001       0.0.0.0:*            LISTEN
    tcp        0      0 172.16.60.4:48582    10.254.0.1:443       ESTABLISHED
    tcp        0      0 127.0.0.1:4001       127.0.0.1:35394      ESTABLISHED
    tcp        0      0 172.16.60.4:48584    10.254.0.1:443       ESTABLISHED
    tcp        0      0 127.0.0.1:60161      127.0.0.1:2379       ESTABLISHED
    tcp        0      0 127.0.0.1:51445      127.0.0.1:4001       ESTABLISHED
    tcp        0      0 127.0.0.1:4001       127.0.0.1:51445      ESTABLISHED
    tcp        0      0 127.0.0.1:35550      127.0.0.1:4001       ESTABLISHED
    tcp        0      0 127.0.0.1:35394      127.0.0.1:4001       ESTABLISHED
    tcp        0      0 127.0.0.1:2379       127.0.0.1:60161      ESTABLISHED
    tcp        0      0 127.0.0.1:4001       127.0.0.1:51433      ESTABLISHED
    tcp        0      0 127.0.0.1:51433      127.0.0.1:4001       ESTABLISHED
    tcp        0      0 127.0.0.1:4001       127.0.0.1:35550      ESTABLISHED
    Active UNIX domain sockets (servers and established)
    Proto RefCnt Flags       Type       State         I-Node Path
    296ff779abb2
    Active Internet connections (servers and established)
    Proto Recv-Q Send-Q Local Address        Foreign Address      State
    tcp        0      0 127.0.0.1:4001       0.0.0.0:*            LISTEN
    tcp        0      0 127.0.0.1:2379       0.0.0.0:*            LISTEN
    tcp        0      0 127.0.0.1:2380       0.0.0.0:*            LISTEN
    tcp        0      0 127.0.0.1:7001       0.0.0.0:*            LISTEN
    tcp        0      0 172.16.60.4:48582    10.254.0.1:443       ESTABLISHED
    tcp        0      0 127.0.0.1:4001       127.0.0.1:35394      ESTABLISHED
    tcp        0      0 172.16.60.4:48584    10.254.0.1:443       ESTABLISHED
    tcp        0      0 127.0.0.1:60161      127.0.0.1:2379       ESTABLISHED
    tcp        0      0 127.0.0.1:51445      127.0.0.1:4001       ESTABLISHED
    tcp        0      0 127.0.0.1:4001       127.0.0.1:51445      ESTABLISHED
    tcp        0      0 127.0.0.1:35550      127.0.0.1:4001       ESTABLISHED
    tcp        0      0 127.0.0.1:35394      127.0.0.1:4001       ESTABLISHED
    tcp        0      0 127.0.0.1:2379       127.0.0.1:60161      ESTABLISHED
    tcp        0      0 127.0.0.1:4001       127.0.0.1:51433      ESTABLISHED
    tcp        0      0 127.0.0.1:51433      127.0.0.1:4001       ESTABLISHED
    tcp        0      0 127.0.0.1:4001       127.0.0.1:35550      ESTABLISHED
    Active UNIX domain sockets (servers and established)
    Proto RefCnt Flags       Type       State         I-Node Path
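The three containers report the same hostname, the same Pod IP, and the same socket table, which is what a shared network namespace should look like. One quick way to check this mechanically is to collect the address each container's `/etc/hosts` maps to the Pod hostname and confirm there is only one. The sketch below uses an abbreviated copy of the `/etc/hosts` content shown in this section. The helper name `pod_addresses` is an assumption for illustration.

```python
def pod_addresses(hosts_by_container, pod_hostname):
    """Return the set of addresses that the containers' /etc/hosts files map to pod_hostname."""
    addresses = set()
    for text in hosts_by_container.values():
        for line in text.splitlines():
            fields = line.split("#", 1)[0].split()  # drop comments, split on whitespace
            if len(fields) >= 2 and pod_hostname in fields[1:]:
                addresses.add(fields[0])
    return addresses


# First two /etc/hosts lines as printed for each container above (remaining lines omitted).
HOSTS_TEXT = "172.16.60.4 kube-dns-v9-y05vd\n127.0.0.1 localhost\n"
HOSTS = {cid: HOSTS_TEXT for cid in ("b00a08d078d6", "4e843585b938", "296ff779abb2")}

if __name__ == "__main__":
    print(pod_addresses(HOSTS, "kube-dns-v9-y05vd"))
```

A result with exactly one address is consistent with all containers living behind the same Pod IP. More than one would suggest they are not sharing a network stack.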

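Now let's analyze the most complex part: communication between containers.

Start with the simplest case, communication between containers in the same Pod. Because they share a network namespace, they can reach each other directly at 127.0.0.1.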
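The sketch below imitates the same-Pod case on a single machine: two threads in one process stand in for two containers that share a loopback interface. This is only an analogy, since real containers in a Pod are separate processes. The function names and the `ping`/`pong` payload are made up for the example.

```python
import socket
import threading


def serve_once(listener):
    """Accept one connection, read a request, and answer it."""
    conn, _ = listener.accept()
    with conn:
        data = conn.recv(1024)
        conn.sendall(b"pong:" + data)


def loopback_roundtrip(payload=b"ping"):
    # "Container A": listen on the loopback interface; port 0 lets the OS pick a free port.
    listener = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    listener.bind(("127.0.0.1", 0))
    listener.listen(1)
    port = listener.getsockname()[1]
    server = threading.Thread(target=serve_once, args=(listener,))
    server.start()

    # "Container B": connect to 127.0.0.1 on that port, as a sibling container would.
    with socket.create_connection(("127.0.0.1", port), timeout=5) as client:
        client.sendall(payload)
        reply = client.recv(1024)

    server.join()
    listener.close()
    return reply


if __name__ == "__main__":
    print(loopback_roundtrip())
```

Because the namespace is shared, two containers in one Pod also cannot bind the same port, just as two processes on one host cannot.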

A cross-node example: the fluentd-elasticsearch container on 192.168.1.224 needs to connect to the elasticsearch-logging container on 192.168.1.223.

The elasticsearch-logging container on 192.168.1.223 and its IP address:

    [root@centos7-node-223 ~]$ docker ps |grep elasticsearch-logging
    667cfd84c979  dockerimages.yinnut.com:15043/elasticsearch:1.7  "/run.sh"  12 days ago  Up 12 days  k8s_elasticsearch-logging.89fda9f_elasticsearch-logging-v1-i8x6q_kube-system_8b558d2c-62a3-11e5-9d7b-525400d7abb6_2a02a2c8
    5201c8cbdebd  gcr.io/google_containers/pause:0.8.0             "/pause"   12 days ago  Up 12 days  k8s_POD.8ecd2043_elasticsearch-logging-v1-i8x6q_kube-system_8b558d2c-62a3-11e5-9d7b-525400d7abb6_4022db35
    [root@centos7-node-223 ~]$ docker inspect 5201c8cbdebd |grep IPAddress
            "IPAddress": "172.16.77.4",  # IP address
            "SecondaryIPAddresses": null,

The Pod that holds the elasticsearch-logging container has IP address 172.16.77.4.

The fluentd-elasticsearch container on 192.168.1.224 and its IP address:

    [root@centos7-node-224 ~]$ docker ps |grep fluentd-elasticsearch
    d326d81468b5  gcr.io/google_containers/fluentd-elasticsearch:1.11  "td-agent -q"  12 days ago  Up 12 days  k8s_fluentd-elasticsearch.27a08aa3_fluentd-elasticsearch-centos7-node-224_kube-system_7dcc6ce562f3742190a876fda85e2359_58c54ef3
    f9b76639d241  gcr.io/google_containers/pause:0.8.0                 "/pause"       12 days ago  Up 12 days  k8s_POD.7be6d81d_fluentd-elasticsearch-centos7-node-224_kube-system_7dcc6ce562f3742190a876fda85e2359_333e52c0
    [root@centos7-node-224 ~]$ docker inspect f9b76639d241 | grep IPAddress
            "IPAddress": "172.16.60.2",
            "SecondaryIPAddresses": null,

The Pod that holds the fluentd-elasticsearch container has IP address 172.16.60.2.

Now look at the network connections of fluentd-elasticsearch.

    [root@centos7-node-224 ~]$ docker exec d326d81468b5 netstat -nla | grep 172.16.77.4
    tcp        0      0 172.16.60.2:56354    172.16.77.4:9200     TIME_WAIT
    tcp        0      0 172.16.60.2:56350    172.16.77.4:9200     TIME_WAIT
    tcp        0      0 172.16.60.2:56347    172.16.77.4:9200     TIME_WAIT
    tcp        0      0 172.16.60.2:56357    172.16.77.4:9200     TIME_WAIT
    tcp        0      0 172.16.60.2:56344    172.16.77.4:9200     TIME_WAIT
    tcp        0      0 172.16.60.2:56352    172.16.77.4:9200     TIME_WAIT

It has indeed connected to port 9200 on 172.16.77.4, and the elasticsearch-logging container on the other side is indeed listening on port 9200.

    [root@centos7-node-223 ~]$ docker exec 667cfd84c979 ss -l|grep LISTEN
    tcp    LISTEN     0      50     :::9200     :::*
    tcp    LISTEN     0      50     :::9300     :::*

So how does this actually work?

192.168.1.224/fluentd-elasticsearch -> 192.168.1.223/elasticsearch-logging

The fluentd-elasticsearch container on 192.168.1.224 needs to connect to the elasticsearch-logging container.

Name to IP: the service name elasticsearch-logging resolves to 10.254.24.205. Note that the dig query captured below returned SERVFAIL with no answer records, because dig does not apply the resolver search list unless +search is given. The service IP 10.254.24.205 is confirmed by the kubectl get svc output later in this section.

    root@fluentd-elasticsearch-centos7-node-224:/$ dig elasticsearch-logging
    ; <<>> DiG 9.9.5-3ubuntu0.5-Ubuntu <<>> elasticsearch-logging
    ;; global options: +cmd
    ;; Got answer:
    ;; ->>HEADER<<- opcode: QUERY, status: SERVFAIL, id: 39181
    ;; flags: qr rd ra; QUERY: 1, ANSWER: 0, AUTHORITY: 0, ADDITIONAL: 0
    ;; QUESTION SECTION:
    ;elasticsearch-logging.         IN      A
    ;; Query time: 1 msec
    ;; SERVER: 10.254.0.10#53(10.254.0.10)
    ;; WHEN: Fri Oct 09 06:04:35 UTC 2015
    ;; MSG SIZE  rcvd: 39

The client then connects to 10.254.24.205:9200. According to the container's routing table, the request goes to the gateway 172.16.60.1, which is the address of the docker0 interface on the Docker host.

    # inside the container
    root@fluentd-elasticsearch-centos7-node-224:/$ ip route
    default via 172.16.60.1 dev eth0
    172.16.60.0/24 dev eth0  proto kernel  scope link  src 172.16.60.2
    # on the host
    [root@centos7-node-224 ~]$ ifconfig docker0
    docker0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST>  mtu 1450
            inet 172.16.60.1  netmask 255.255.255.0  broadcast 0.0.0.0
            inet6 fe80::5484:7aff:fefe:9799  prefixlen 64  scopeid 0x20<link>
            ether 56:84:7a:fe:97:99  txqueuelen 0  (Ethernet)
            RX packets 10182154  bytes 1777103288 (1.6 GiB)
            RX errors 0  dropped 0  overruns 0  frame 0
            TX packets 11195534  bytes 2271907616 (2.1 GiB)
            TX errors 0  dropped 0 overruns 0  carrier 0  collisions 0
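The routing decision can be reproduced with a small longest-prefix-match lookup over the two routes printed by `ip route` inside the container. This is a simplified model of what the kernel does, not its actual implementation. The function name `next_hop` and the tuple layout of `ROUTES` are assumptions for the example.

```python
import ipaddress


def next_hop(destination, routes):
    """Pick the most specific matching route and return (gateway, device).

    A gateway of None means the destination is directly reachable on the link.
    """
    dest = ipaddress.ip_address(destination)
    best = None
    for prefix, gateway, device in routes:
        network = ipaddress.ip_network(prefix)
        if dest in network and (best is None or network.prefixlen > best[0].prefixlen):
            best = (network, gateway, device)
    if best is None:
        raise LookupError("no route to " + destination)
    return best[1], best[2]


# The two routes shown by `ip route` in the fluentd-elasticsearch container.
ROUTES = [
    ("0.0.0.0/0", "172.16.60.1", "eth0"),  # default via 172.16.60.1 dev eth0
    ("172.16.60.0/24", None, "eth0"),      # 172.16.60.0/24 dev eth0 (on-link)
]

if __name__ == "__main__":
    print(next_hop("10.254.24.205", ROUTES))  # service IP: falls through to the default route
    print(next_hop("172.16.60.4", ROUTES))    # address in the local Pod subnet: on-link
```

Since 10.254.24.205 is outside 172.16.60.0/24, only the default route matches, so the packet is handed to docker0 on the host, where iptables can act on it.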

iptables redirects the request to 192.168.1.224:36967, a port on which the kube-proxy process is listening.

    [root@centos7-node-224 ~]$ iptables-save | grep 10.254.24.205 |grep 9200
    -A KUBE-PORTALS-CONTAINER -d 10.254.24.205/32 -p tcp -m comment --comment "kube-system/elasticsearch-logging:" -m tcp --dport 9200 -j REDIRECT --to-ports 36967
    -A KUBE-PORTALS-HOST -d 10.254.24.205/32 -p tcp -m comment --comment "kube-system/elasticsearch-logging:" -m tcp --dport 9200 -j DNAT --to-destination 192.168.1.224:36967
    [root@centos7-node-224 ~]$ netstat -nlp|grep 36967
    tcp6       0      0 :::36967        :::*        LISTEN      930/kube-proxy
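To read these rules without squinting, the sketch below pulls the interesting fields out of the two `iptables-save` lines copied from the output in this section. It is a deliberately small parser that only knows the options appearing in these rules. The names `OPTION_NAMES` and `parse_portal_rule` are assumptions for the example.

```python
import shlex

# Options of interest in the two rules, mapped to readable field names.
OPTION_NAMES = {
    "-A": "chain",
    "-d": "destination",
    "-p": "protocol",
    "--comment": "comment",
    "--dport": "dport",
    "-j": "target",
    "--to-ports": "to_ports",
    "--to-destination": "to_destination",
}


def parse_portal_rule(rule):
    """Extract option/value pairs from one iptables-save rule line."""
    tokens = shlex.split(rule)  # handles the quoted --comment value
    fields = {}
    for option, value in zip(tokens, tokens[1:]):
        if option in OPTION_NAMES:
            fields[OPTION_NAMES[option]] = value
    return fields


CONTAINER_RULE = (
    '-A KUBE-PORTALS-CONTAINER -d 10.254.24.205/32 -p tcp -m comment '
    '--comment "kube-system/elasticsearch-logging:" -m tcp --dport 9200 '
    '-j REDIRECT --to-ports 36967'
)
HOST_RULE = (
    '-A KUBE-PORTALS-HOST -d 10.254.24.205/32 -p tcp -m comment '
    '--comment "kube-system/elasticsearch-logging:" -m tcp --dport 9200 '
    '-j DNAT --to-destination 192.168.1.224:36967'
)

if __name__ == "__main__":
    for rule in (CONTAINER_RULE, HOST_RULE):
        print(parse_portal_rule(rule))
```

The first rule covers traffic coming from containers (REDIRECT to local port 36967), and the second covers traffic originating on the host itself (DNAT to 192.168.1.224:36967). Both end at the port where kube-proxy listens.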

Who answers requests to 10.254.24.205:9200? From the analysis above it is kube-proxy, and since kube-proxy is only a proxy, the next question is where it forwards them. The answer is a Pod. The Selector of this service is k8s-app=elasticsearch-logging:

    [root@centos7-node-224 ~]$ kubectl get svc --all-namespaces | grep 10.254.24.205
    kube-system   elasticsearch-logging   10.254.24.205   <none>   9200/TCP   k8s-app=elasticsearch-logging   14d

The matching Pods are elasticsearch-logging-v1-gph5i and elasticsearch-logging-v1-i8x6q.

    [root@centos7-node-224 ~]$ kubectl get po -l k8s-app=elasticsearch-logging --all-namespaces
    NAMESPACE     NAME                             READY     STATUS    RESTARTS   AGE
    kube-system   elasticsearch-logging-v1-gph5i   1/1       Running   6          14d
    kube-system   elasticsearch-logging-v1-i8x6q   1/1       Running   5          14d

The IP address of the elasticsearch-logging-v1-i8x6q Pod is 172.16.77.4.

    [root@centos7-node-224 ~]$ kubectl describe po elasticsearch-logging-v1-i8x6q --namespace=kube-system | grep IP
    IP:                         172.16.77.4

So kube-proxy forwards part of the requests to one of these Pods, the one whose IP address is 172.16.77.4, and that Pod runs on host 192.168.1.223.

    [root@centos7-node-223 ~]$ docker inspect 5201c8cbdebd | grep IPAddress
            "IPAddress": "172.16.77.4",
            "SecondaryIPAddresses": null,
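The article does not say how kube-proxy chooses between the two backend Pods. As an illustration only, the sketch below assumes a simple round-robin policy. The IP address of elasticsearch-logging-v1-gph5i is not shown in the article, so a placeholder string stands in for it. The class name `RoundRobinBackends` is also made up for the example.

```python
import itertools


class RoundRobinBackends:
    """Hand out backend endpoints in turn (an assumed policy, for illustration)."""

    def __init__(self, endpoints):
        endpoints = list(endpoints)
        if not endpoints:
            raise ValueError("at least one endpoint is required")
        self._cycle = itertools.cycle(endpoints)

    def pick(self):
        return next(self._cycle)


# 172.16.77.4 is the Pod IP of elasticsearch-logging-v1-i8x6q from the output above.
# The second entry is a placeholder: the article never shows the IP of ...-gph5i.
ENDPOINTS = ["172.16.77.4:9200", "POD_GPH5I_IP:9200"]

if __name__ == "__main__":
    backends = RoundRobinBackends(ENDPOINTS)
    for _ in range(4):
        print(backends.pick())
```

Under this assumption roughly half of the connections land on 172.16.77.4, which fits the observation that fluentd-elasticsearch holds connections to that address.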

So how does the traffic reach 172.16.77.4? Communication across hosts relies on an overlay network such as flannel, or on an L2 network such as OVS (Open vSwitch).
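Overlay networks of this kind typically give each host its own Pod subnet and keep a mapping from subnet to host, so a packet for a remote Pod can be encapsulated and sent to the host that owns the destination subnet. The sketch below shows only that lookup step. The /24 size for node 224 comes from the docker0 netmask shown earlier, while the /24 sizes for nodes 223 and 226 are assumptions inferred from the Pod IPs in this article. `SUBNET_TO_HOST` and `host_for_pod` are hypothetical names.

```python
import ipaddress

# Pod subnet -> host address. Only the first entry's prefix length is confirmed by the article.
SUBNET_TO_HOST = {
    "172.16.60.0/24": "192.168.1.224",  # docker0 on node 224 is 172.16.60.1, netmask 255.255.255.0
    "172.16.77.0/24": "192.168.1.223",  # assumed /24; Pod 172.16.77.4 runs on node 223
    "172.16.58.0/24": "192.168.1.226",  # assumed /24; Pod 172.16.58.6 runs on node 226
}


def host_for_pod(pod_ip, table=SUBNET_TO_HOST):
    """Return the host that owns the subnet containing pod_ip, or None if no subnet matches."""
    address = ipaddress.ip_address(pod_ip)
    for subnet, host in table.items():
        if address in ipaddress.ip_network(subnet):
            return host
    return None


if __name__ == "__main__":
    print(host_for_pod("172.16.77.4"))
```

In the example from this article, traffic leaving kube-proxy on 192.168.1.224 for 172.16.77.4 would be carried to 192.168.1.223 and delivered to the Pod there.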
